Claude for developers: beyond code generation

Code generation was never the real bottleneck. Claude for developers excels at code review, architecture discussions, and debugging conversations. One solo developer reported a 164% jump in weekly output from these collaborative thinking tasks. The gain came not from typing faster but from thinking more deeply about system design.

What you will learn

- Code review beats code generation - Claude excels at understanding and analyzing code rather than just writing it, catching bugs humans miss and giving detailed architectural feedback

- Architecture discussions change planning - Extended thinking mode enables deep reasoning about design patterns, trade-offs, and system complexity before writing a single line

- Productivity can more than double through review workflows - One solo developer reported a 164% improvement in weekly story points after shifting from generation to collaborative review and debugging conversations

- Different tool than Copilot - Where Copilot speeds up typing with autocomplete, Claude makes you think better about architecture, security, and long-term maintainability

Our dev team stopped asking Claude to write code about three months ago.

Now we use it for code review, architecture planning, and debugging conversations. Productivity doubled. Generation was never the point.

I’ve watched companies treat Claude for developers like an autocomplete tool when it’s actually closer to having a senior architect who never sleeps. The difference matters. A lot.

What developers actually need from AI

After watching our team work with Claude Code for a quarter, a pattern became obvious. They spend most of their time reviewing code, not writing it. Debugging weird behaviors. Discussing trade-offs between architectural approaches. Planning how systems should fit together.

Claude Sonnet 4.5 became the default model with Claude Code 2.0 in late 2025, bringing a 77.2% score on SWE-bench Verified and a reported ability to run for 30+ hours on complex tasks without losing coherence.

Writing code is maybe 30% of the work. The rest is thinking. Understanding the different Claude modes helps you pick the right tool for each type of work.

Traditional code assistants optimize that 30%. They make you type faster, suggest completions, generate boilerplate. All useful. But they miss the 70% where developers actually create value.

One solo developer reported story point completion jumping from 14 to 37 points weekly, a 164% improvement. Time spent resolving bugs dropped by 60%. Not because Claude wrote more code. Because it helped him think through problems before coding.
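The 164% figure follows directly from the two story-point numbers quoted above. Here is a quick check of the arithmetic; the helper name `percent_change` is illustrative only:

```python
def percent_change(before: float, after: float) -> float:
    """Return the relative change from `before` to `after`, in percent."""
    if before == 0:
        raise ValueError("percent change is undefined when the baseline is zero")
    return (after - before) / before * 100


# Story points per week quoted in the article: 14 before, 37 after.
improvement = percent_change(14, 37)
print(f"{improvement:.1f}%")  # prints 164.3%
```

A 164% improvement means output grew to roughly 2.64 times the baseline, which is more than a doubling.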

Turns out, that shift from writing to thinking is what separates Claude from every other coding assistant.

Architecture first, implementation second

Extended thinking mode changed how teams approach complex problems. Claude pauses to generate reasoning steps you can actually inspect. It works through architectural trade-offs before suggesting solutions, not after.
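For readers who reach Claude through the API rather than Claude Code, extended thinking is requested per call. The sketch below only assembles the request arguments as a plain dictionary, so it runs without network access or an API key. It assumes the `thinking` parameter shape of Anthropic's Messages API (`type` plus `budget_tokens`, with the budget at least 1,024 tokens and below `max_tokens`). The model alias, the token numbers, the helper name `build_architecture_request`, the prompt wording, and the three candidate approaches are all illustrative assumptions, not the setup described in this article.

```python
from typing import Any

MIN_THINKING_BUDGET = 1024  # assumed minimum budget; check the current API docs


def build_architecture_request(
    problem: str,
    options: list[str],
    model: str = "claude-sonnet-4-5",  # assumed model alias
    max_tokens: int = 16000,
    thinking_budget: int = 8000,
) -> dict[str, Any]:
    """Assemble Messages API arguments that ask for trade-offs before code."""
    if thinking_budget < MIN_THINKING_BUDGET:
        raise ValueError("thinking budget is below the assumed minimum")
    if thinking_budget >= max_tokens:
        raise ValueError("thinking budget must be smaller than max_tokens")

    option_lines = "\n".join(f"- {name}" for name in options)
    prompt = (
        "Do not write code yet. Compare these approaches and lay out the "
        "trade-offs for performance, maintainability, and flexibility.\n\n"
        f"Problem: {problem}\n\nCandidate approaches:\n{option_lines}"
    )
    return {
        "model": model,
        "max_tokens": max_tokens,
        "thinking": {"type": "enabled", "budget_tokens": thinking_budget},
        "messages": [{"role": "user", "content": prompt}],
    }


# Hypothetical problem statement and candidate patterns, for illustration only.
request = build_architecture_request(
    "Redesign workflow state transitions",
    ["event sourcing", "explicit state machine", "status column with guards"],
)
print(request["thinking"])
```

With the official `anthropic` Python SDK installed and an API key configured, a dictionary like this could be passed as `anthropic.Anthropic().messages.create(**request)`. The reasoning would then be expected to arrive as separate thinking blocks ahead of the final text.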

Our backend team needed to redesign how we handle workflow state transitions. Complex problem. Multiple valid approaches. Real implications for performance, maintainability, and future flexibility.

They started a conversation with Claude in plan mode. Not asking it to write code. Asking it to explore the problem space first.

Claude analyzed the existing codebase, mapped dependencies, identified bottlenecks, compared three architectural patterns, and laid out trade-offs for each approach. All before suggesting a single implementation detail. The conversation felt like working with a senior architect who had just spent two days studying your entire codebase. Claude Code’s plan mode creates a read-only environment where it explores patterns and formulates strategies without touching files.

Claude vs Copilot - key difference

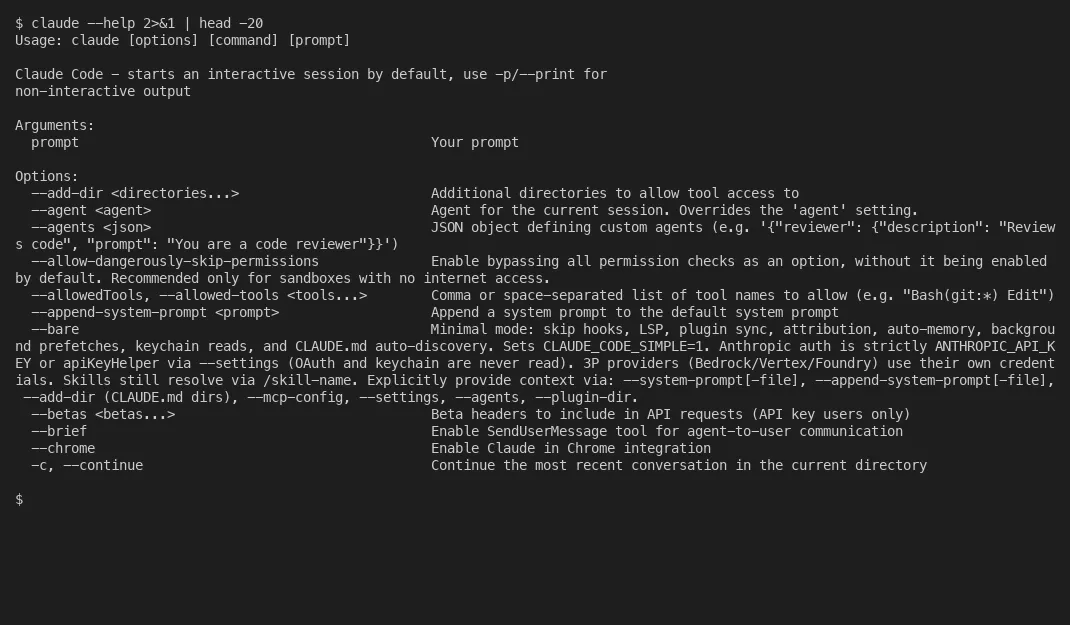

Copilot excels at inline code completion within your IDE, speeding up typing. Claude Code runs in your terminal with a 200,000-token context window, understanding your entire codebase to help with architecture planning, multi-file refactoring, and complex reasoning tasks that span hours.

This is radically different from autocomplete. It thinks with you, not just for you.

Ann Jose described it well: “If Copilot is your pair programmer, Claude is your senior architect.”

Where code review gets interesting

Claude finds bugs humans miss. Not syntax errors. Logic errors. Security issues. Architectural problems that surface months later.

Anthropic’s security review feature scans pull requests for security vulnerabilities using deep semantic analysis. It examines code changes for common vulnerability patterns, checks dependency risks, and flags logic flaws that static analyzers typically miss.

Our team caught three major security issues in a recent sprint. All found during Claude’s review. All missed during human review. That’s not a small thing.

Claude Code 2.0 reduced code editing error rates from 9% to near zero in internal testing, with new checkpoint features letting you save and roll back states for risk-free experimentation. Not bad for a code tool.

Why does Claude work better for review than generation? Understanding matters more than writing. When generating code, Claude has to guess at context, intent, and constraints. When reviewing code, all three are explicit. The code exists. The intent is documented. The constraints are visible. Claude can focus on finding problems.

Pattern recognition across codebases. Consistency checking. Security analysis. Performance review. These require understanding entire systems, not just completing the next line. Real developer feedback confirms this. Teams use Claude for semantic analysis, moving past superficial syntax checks into actual logic verification. The same review muscle pairs naturally with AI test generation for the spots where review surfaces missing coverage.

The debugging conversation pattern

Here’s where it gets interesting. Debugging with Claude feels like working with someone who has infinite patience and perfect memory.

You describe the problem. Claude asks clarifying questions. You share error logs. Claude forms hypotheses. You test them. Claude refines based on results. The conversation builds.

This back-and-forth works because Claude maintains context across the entire exchange. It remembers what you tried 20 messages ago. It connects patterns between this bug and architectural decisions from earlier in the session.
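Mechanically, "maintaining context" in an API-driven session means every request carries the earlier turns with it. Below is a minimal sketch of that bookkeeping, assuming the `user`/`assistant` message format used by the Messages API. The `DebugSession` class, its method names, and the sample messages are hypothetical and exist only for illustration. The sketch enforces strict alternation of roles for simplicity.

```python
from dataclasses import dataclass, field


@dataclass
class DebugSession:
    """Keeps the full exchange so each new request can include prior turns."""

    messages: list[dict[str, str]] = field(default_factory=list)

    def add_user(self, text: str) -> None:
        self._append("user", text)

    def add_assistant(self, text: str) -> None:
        self._append("assistant", text)

    def _append(self, role: str, text: str) -> None:
        # This sketch requires roles to alternate, starting with the user.
        expected = "user" if len(self.messages) % 2 == 0 else "assistant"
        if role != expected:
            raise ValueError(f"expected a {expected} turn, got {role}")
        self.messages.append({"role": role, "content": text})

    def turns_ago(self, n: int) -> dict[str, str]:
        """Look up what was said n turns back (1 = most recent)."""
        if not 1 <= n <= len(self.messages):
            raise IndexError("no such turn in this session")
        return self.messages[-n]


# Hypothetical exchange about a duplicate-processing bug.
session = DebugSession()
session.add_user("Orders are occasionally processed twice under load.")
session.add_assistant("Is the worker idempotent? Please share the retry logs.")
session.add_user("Logs attached. Retries fire before the first ack lands.")

print(len(session.messages))         # prints 3
print(session.turns_ago(3)["role"])  # prints user
```

Because the whole list is sent with each request, a hypothesis ruled out early in the session stays visible to the model later on. That is the property the debugging loop relies on.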

One developer on our team spent three days tracking down a race condition. Finally asked Claude. Solved in 40 minutes.

Not because Claude magically knew the answer. Because it could hold the entire problem space in working memory while systematically eliminating possibilities. Humans lose track after the fifth hypothesis. Claude doesn’t.

Extended thinking helps here too. For complex debugging, Claude Opus 4.5 can spend minutes reasoning through possibilities before responding, with a configurable effort parameter that lets you trade response thoroughness for token efficiency. The hybrid architecture switches between quick responses and deep analysis based on problem complexity.

How this actually changes team output

The productivity gains come from eliminating context switching, not from typing faster.

A developer working on a feature used to switch between writing code, reviewing documentation, checking existing implementations, and asking team members about architectural decisions. Each switch costs 15-20 minutes to rebuild context. That’s a painful tax.
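To see how quickly that tax compounds, here is a back-of-the-envelope calculation using the 15-20 minute range above. The count of four switches is a hypothetical example based on the four activities listed, not a measured figure, and `context_switch_cost` is an illustrative helper name.

```python
def context_switch_cost(
    switches: int, low_min: int = 15, high_min: int = 20
) -> tuple[int, int]:
    """Return the (low, high) minutes lost to rebuilding context."""
    if switches < 0:
        raise ValueError("switches must be non-negative")
    return switches * low_min, switches * high_min


# Hypothetical: one pass through writing, docs, existing code, and teammates.
low, high = context_switch_cost(4)
print(f"{low}-{high} minutes")  # prints 60-80 minutes
```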

Now they have a single conversation with Claude that spans all those contexts. Architecture discussion flows into implementation flows into testing strategy flows into documentation. One continuous thread.

Multiple teams report similar patterns. One staff engineer described hitting 2-3x faster feature development after integrating Claude into his daily workflow. With Sonnet 4.5 capable of running extended sessions on complex tasks without losing coherence, developers can maintain continuous context across entire feature implementations. The flip side is tool selection - the breakdowns of Claude vs Cursor for enterprise and of Claude vs Amazon Q walk through which tool fits which team shape.

Less time context switching, more time in flow state. Less time searching for examples, more time discussing trade-offs. Less time debugging in isolation, more time having productive conversations that actually go somewhere.

GitLab reported notable efficiency gains after integrating Claude into development workflows, and Sourcegraph saw similar improvements in code search and review speed across their engineering teams.

But I think what matters more than the percentages is this: developers report being better at their jobs, not just faster. They understand systems more deeply. They make better architectural decisions. They catch problems earlier. That’s a different kind of value. Will this replace developers? No.

The teams getting this right treat Claude like a thinking partner, not a code generator. They ask it to review, discuss, analyze, and explain. They use it to explore problem spaces before committing to solutions. They’ve figured out that code generation was never the bottleneck.

Thinking was.

About the Author

Amit Kothari is an experienced consultant, advisor, coach, and educator specializing in AI and operations for executives and their companies. With 25+ years of experience, he is the Co-Founder & CEO of Tallyfy® (raised $3.6m, the Workflow Made Easy® platform) and Partner at Blue Sheen, an AI advisory firm for mid-size companies. He helps companies identify, plan, and implement practical AI solutions that actually work. Originally British and now based in St. Louis, MO, Amit combines deep technical expertise with real-world business understanding.

Disclaimer: The content in this article represents personal opinions based on extensive research and practical experience. While every effort has been made to ensure accuracy through data analysis and source verification, this should not be considered professional advice. Always consult with qualified professionals for decisions specific to your situation.